L Bertin et al. Clin Gastroenterol Hepatol 2026; 24: 1220-1231. Open Access! Beyond Eosinophils: Redefining the Spectrum of Esophageal Inflammatory Diseases Through an Immune-Centric Paradigm

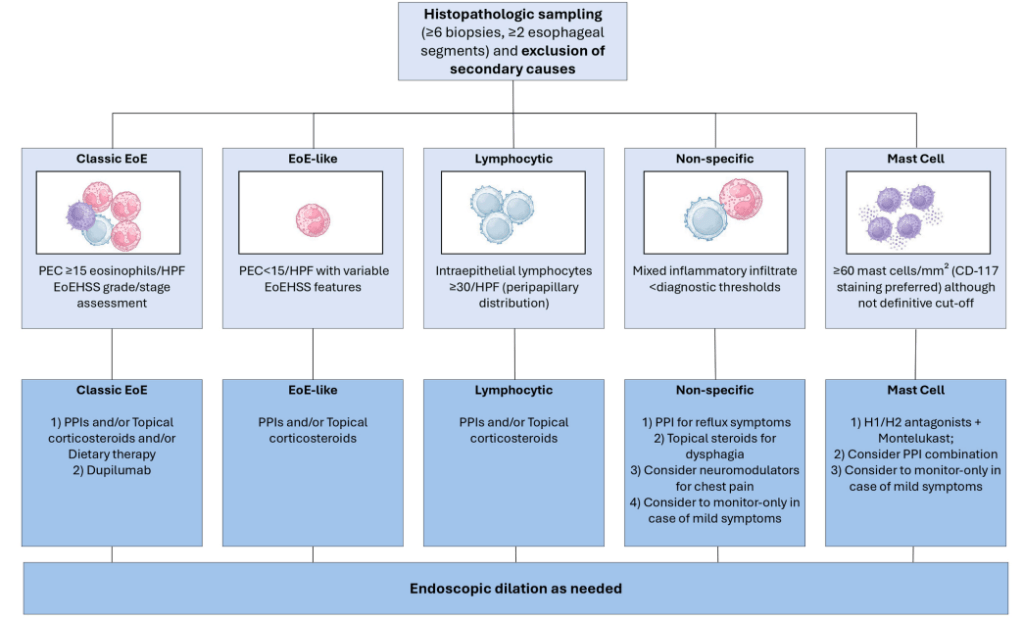

This narrative article reviews four distinct variants beyond classical eosinophilic esophagitis (EoE): EoE-like esophagitis (histologic features of EoE with <15 eosinophils/hpf), lymphocytic esophagitis (LyE; ≥30 lymphocytes/hpf), nonspecific esophagitis (NsE; mixed inflammatory infiltrates), and mast cell esophagitis (McE; elevated intraepithelial mast cells).

Key points:

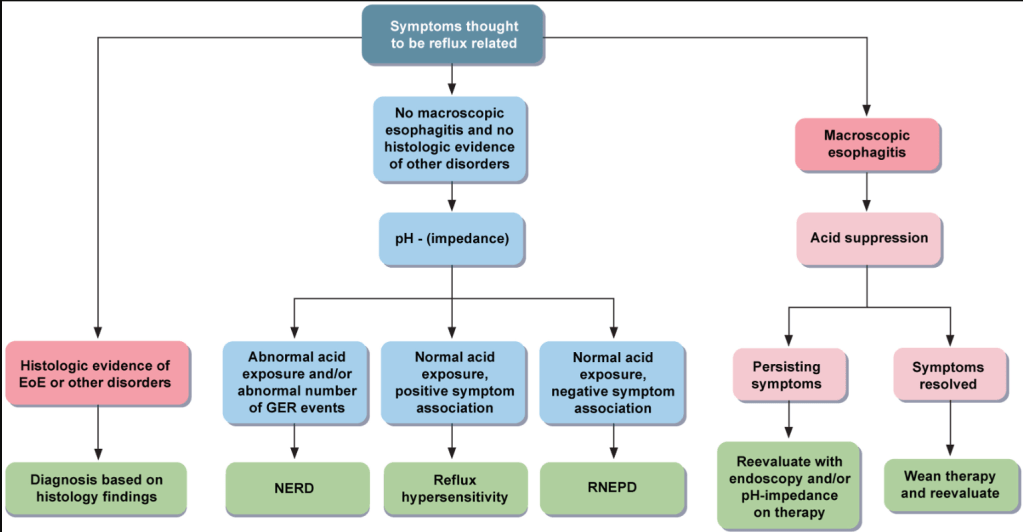

- Differential diagnosis: “Esophageal eosinophilia is not pathognomonic for EoE or EoE-like esophagitis. Alternative causes include GERD, achalasia and other esophageal motility disorders, infectious esophagitis, drug hypersensitivity reactions, connective tissue disorders (systemic sclerosis and dermatomyositis), hypereosinophilic syndrome, graft-versus-host disease, and Crohn’s disease with esophageal involvement.”

- “EIDs [esophageal inflammatory diseases] manifest with overlapping yet distinct clinical and endoscopic features.49 Dysphagia remains the most consistent symptom across the spectrum, although its prevalence varies significantly.”

- “EoE-like esophagitis shows interleukin-13 pathway overlap with classical EoE and may progress to EoE (62.2% by 6 years)”

- “LyE treatment remains largely empirical, with PPIs showing variable efficacy (24%–86% improvement),53 with a recent multicenter study showing it achieved even lower histological (13% vs 48%) and symptomatic improvement (6.7% vs 43%).59 As a second-line therapy, topical steroids should be considered.60”

- “NsE lacks consensus management strategies, with empiric approaches based on presenting symptoms typically employed.16”

- “McE treatment evidence derives primarily from case reports showing response to combinations targeting mast cell activity.”

My take: Esophageal inflammatory disorders other than EoE are uncommon in the pediatric age group. This article provides a useful review and practical guidance.